Text-to-Image generation is a remarkable achievement in artificial intelligence that transforms verbal descriptions into aesthetically pleasing visuals using computer vision and natural language processing. Recent developments in deep learning methods and neural networks are primarily to thank for the impressive gains in this technology. We shall examine the intricate workings of Text-to-Image in this article, as well as the underlying systems that enable it.

Neural Language Models

A potent neural language model sits at the heart of text-to-image generation. To comprehend and anticipate the links between words, sentences, and concepts, these models—often based on recurrent or transformer architectures—have been trained on enormous volumes of textual data. The quality and adaptability of text representation have been greatly enhanced by pre-trained models, such GPT-3, making them a crucial part of Text-to-Image systems.

Neural Language Models in NLP

A family of artificial intelligence models known as neural language models is created specifically to comprehend and produce human language. They have made a significant contribution to natural language processing (NLP) tasks and are at the core of numerous applications, such as chatbots, sentiment analysis, machine translation, and text production.

Recurrent Neural Networks (RNNs) and Transformers

Recurrent Neural Networks (RNNs) and Transformer-based models can be widely characterized as the two types of neural language models’ basic architecture.

RNNs (recurrent neural networks)

One of the oldest varieties of neural language models is the RNN. They handle data sequences by preserving a hidden state that stores the knowledge from earlier time steps. As the model reads each word in the input sentence, this hidden state is changed incrementally. The context of the entire sequence is stored in the last hidden state, which is utilized to anticipate the following word.

RNNs can be ineffective at capturing long-range dependencies because of their limitations, such as vanishing or exploding gradients over lengthy sequences.

Transformer-based models

The “Attention is All You Need” paper introduced the Transformer architecture, which completely changed NLP. Transformers use self-attentional mechanisms to directly model the relationships between the words in a sentence. They are superior to RNNs at capturing long-range dependencies, which makes them ideal for a variety of NLP tasks.

Self-Attention Mechanism

An essential component of Transformer-based neural language models is the self-attention mechanism. It enables the model to produce predictions while considering the relative weights of the various words in a sentence. Self-attention takes into account every word in the sentence at once as opposed to just the present hidden condition.

Self-Attention Mechanism in Detail

Each word has three vectors throughout the self-attention process: query, key, and value. The key and value vectors represent the other words in the sentence, while the query vector represents the word that needs to be fixed. Each word is assigned a weight by the attention mechanism, indicating how much consideration it should receive when anticipating the subsequent word in the sequence.

Pre-training and Fine-tuning

Using unsupervised learning, the majority of contemporary neural language models are pre-trained on a substantial corpus of text data. Pre-training incorporates activities like predicting the next word given the previous context (Next Sentence Prediction – NSP) or guessing the missing words in a sentence (Masked Language Model – MLM). These pre-trained models are subsequently refined using supervised learning with task-specific labeled data for a variety of downstream tasks.

Transfer Learning

The capability of neural language models to transfer knowledge from pre-training to downstream tasks is one of its key advantages. Even when trained on comparatively limited task-specific datasets, models can gain by discovering patterns from enormous amounts of heterogeneous data by utilizing the pre-learned representations.

Notable Neural Language Models

Several well-known neural language models are:

- GPT (Generative Pre-trained Transformer) and its subsequent versions, GPT-2 and GPT-3.

- BERT is an acronym for “Bidirectional Encoder Representations from Transformers.”

- A Robustly Optimized BERT Pretraining Approach is known as RoBERTa.

- Extreme MultiTask Learning, or XLNet.

Image Encoder Networks

The image encoder network is a key element in the conversion of text into images. This neural network is in charge of converting the written descriptions into a language that the image generator can comprehend. Typically, to do this, high-level semantic information from the text must be extracted to direct the production procedure.

Attention Processes

In the creation of Text-to-Image, attention processes have been demonstrated to be essential. These processes enable the model to generate the relevant image while concentrating on particular areas of the input text. The model may better connect the textual description with the visual components to provide more cogent and realistic visuals by selectively attending to relevant information.

Text-to-Image Synthesis with GANs

Text-to-image synthesis greatly benefits from the use of Generative Adversarial Networks (GANs). The discriminator and the generator are the two neural networks that make up GANs. The discriminator assesses the veracity of the created images, while the generator seeks to produce visuals from the textual input. The generator learns to produce images that are progressively challenging for the discriminator to distinguish from genuine photos through an adversarial training process, producing visually appealing outcomes.

Learning Cross-Modal Embeddings

Learning cross-modal embeddings, in which both the textual and visual data are represented in the same feature space, is essential for producing text-to-image conversions. The model can translate textual descriptions into cohesive and visually correct representations thanks to these embeddings, which help it understand the connections between words and their visual counterparts.

Learning cross-modal embeddings, in which both the textual and visual data are represented in the same feature space, is essential for producing text-to-image conversions. The model can translate textual descriptions into cohesive and visually correct representations thanks to these embeddings, which help it understand the connections between words and their visual counterparts.

Conditioning Techniques

Conditioning techniques are used to make sure the generated images match certain text-referenced qualities. As an illustration, if the input text refers to a “red car,” the model should generate an image of a red car. Conditioning guarantees that the result accurately matches the specified description and aids in regulating the creation process.

Data Augmentation

During training, data augmentation techniques are frequently used to increase the variety and caliber of generated images. These methods subtly alter the verbal descriptions to provide variations, resulting in a wider selection of images for a given request.

A intriguing and challenging area of deep learning is text-to-image generation, which successfully integrates computer vision with natural language understanding. The magic of converting text into breathtaking visual representations is made possible by the complex interplay of neural language models, image encoder networks, attention mechanisms, GANs, cross-modal embeddings, conditioning strategies, and data augmentation. We may anticipate even more stunning and realistic outcomes as this technology develops, opening up fresh opportunities in a variety of industries, including art, design, and entertainment.

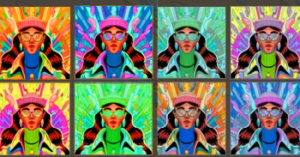

Text-to-Image generation has reached new heights in the realm of artificial intelligence, delivering visually stunning and aesthetically pleasing visuals that align with verbal descriptions. This remarkable achievement is made possible through the interplay of various components, and two key factors play a crucial role in creating great looking images: prompt engineering and prompt search engines.

Prompt Engineering

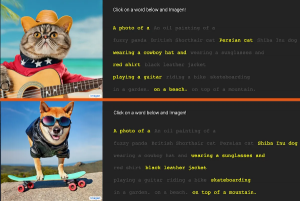

Prompt engineering is an essential process in Text-to-Image generation that involves crafting precise and effective textual prompts to guide the image generation model. The prompt serves as the starting point for the model and determines the nature, style, and content of the final image. Properly crafted prompts can greatly influence the quality and relevance of the generated visuals.

The language used in the prompt should be carefully chosen to convey the desired visual details. Ambiguity in the prompt can lead to unexpected or undesired results. For instance, using generic phrases like “a beautiful scene” may produce subjective interpretations, leading to a lack of cohesion in the generated image.

On the other hand, a well-engineered prompt with specific details like “a serene beach at sunset with palm trees and gentle waves” guides the model to create a cohesive and realistic image that aligns with the given description.

Moreover, prompt engineering can include incorporating style or genre preferences. For instance, if a user wants an image in the style of a watercolor painting, the prompt can be tailored accordingly, influencing the model to generate visuals that emulate the desired artistic style.

Prompt Search Engines

While prompt engineering lays the foundation, ai image prompt search engines such as https://lexica.art/ and http://www.daprompts.com/ play a vital role in the fine-tuning process of the Text-to-Image model. Prompt search engines aim to optimize the prompt to achieve the best possible image output. This process often involves iterative refinement and exploration of various prompts to find the most optimal one.

Prompt search engines utilize optimization algorithms and techniques to adjust and enhance the prompt based on predefined metrics. These metrics can be objective, such as image quality scores, or subjective, incorporating human feedback and preferences. The goal is to guide the model towards generating images that meet the user’s expectations and requirements.

The process of prompt search involves generating multiple candidate prompts, feeding them to the Text-to-Image model, and evaluating the resulting images. By comparing the generated images with the desired outcome, the search engine can make adjustments to the prompt iteratively, aiming to improve the quality and relevance of the visuals.

Importance in Creating Great Looking Images

Prompt engineering and prompt search engines are essential to creating great looking images in Text-to-Image generation for several reasons:

- Control over Image Generation: Well-crafted prompts give users more control over the visual output, allowing them to specify details and style preferences.

- Relevance and Cohesion: Carefully engineered prompts ensure that the generated images are coherent and align with the given description, reducing ambiguity and irrelevant results.

- Customization: Prompt engineering allows users to customize the generated images to suit their specific needs and preferences, opening up a wide range of applications.

- Optimization for Perfection: Prompt search engines fine-tune the output, optimizing it for the best possible image quality based on objective and subjective metrics.

- Enhanced User Experience: By providing users with the ability to fine-tune the output, prompt engineering and prompt search engines lead to a more satisfying and enjoyable user experience.

Prompt engineering and prompt search engines are indispensable components of Text-to-Image generation. Their role in crafting precise and effective prompts and optimizing the visual output ensures that users can create great looking images that align with their imagination and preferences. As these technologies continue to advance, we can expect even more impressive and realistic visual representations, unlocking endless creative possibilities for artists, designers, and anyone seeking to bring their ideas to life.